Bentley’s most popular reality modeling applications

What is the Reality Modeling WorkSuite?

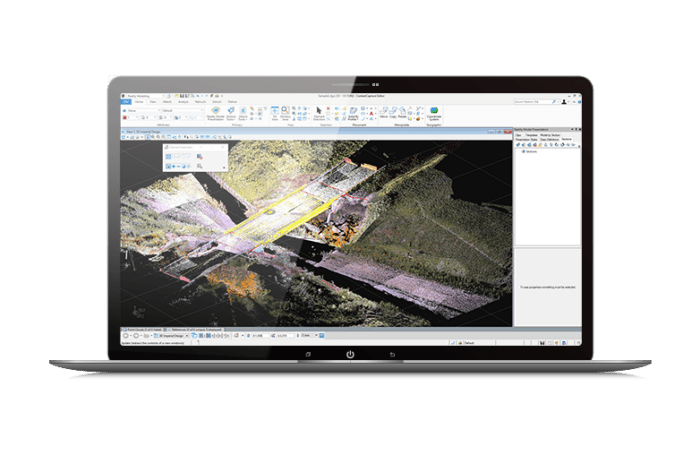

- iTwin Capture Modeler

Produce 3D models of existing conditions for infrastructure projects, derived from photographs and/or point clouds. These highly detailed, 3D reality meshes provide precise real-world context for design, construction, and operations decisions throughout the lifecycle of a project. Develop precise reality meshes affordably with less investment of time and resources in specialized acquisition devices and associated training. You can easily produce 3D models using photos taken with an ordinary camera and/or LiDAR point clouds captured with a laser scanner, resulting in fine details, sharp edges, and geometric accuracy. Dramatically reduce processing time with the ability to run two iTwin Capture Modeler instances in parallel on a single project.

- Reality Data Management

Reality Data Management is a cloud service for storing, managing, and sharing reality data. Collaborate more effectively by sharing visuals of the 3D reality mesh with your teams. The service extends Bentley’s ProjectWise connected data environment to securely manage, share, and stream reality meshes and their input sources across project teams and applications, increasing team productivity and collaboration. It enables you to stream large amounts of reality modeling data without the need for high-end hardware or complex IT infrastructure. Reality Data Management is accessible through Bentley’s software applications, such as MicroStation, Bentley Descartes, and more. UAV companies and surveying and engineering firms that use reality modeling in-house can quickly access their 3D reality meshes generated with iTwin Capture Modeler.

Reality Modeling WorkSuite

- Create, share and consume with one solution

- Generate engineering-ready geometrical models

- Produce 3D meshes with high quality texture

- Automate 3D mesh generation workflow

*Prices vary per region. For more options, see the Licensing and Subscription options section.

User Quote

With these data capture methods, the annual potential savings by the government is at least SGD 5 million with a refresh cycle of once every two years.

— Hui Ying Teo, Senior Principal Surveyor, Singapore Land Authority

Featured User Stories

Khatib & Alami

Digital Twin Improves Plan for Flooding

ContextCapture and LumenRT helped improve efficiencies, reduce costs, and deliver the project ahead of schedule.

AEGEA

Brazil’s Largest 3D Sanitation Map (Digitalization Of Rio De Janeiro)

Leveraging ContextCapture and Bentley’s open modeling applications, Aegea processed a total of 156,000 drone-captured photos to generate a 3D reality mesh and digital twin across 13 Brazilian states.

MRT Jakarta Develops Sustainable Transport

Using Bentley’s Digital Twin Applications Saved 10% in Working Time and Reduced Hard Copy Submissions by 90%.

With AssetWise and SYNCHRO, PT MRT Jakarta expects to minimize construction costs and schedule overruns to keep the project on target.

FAQs

Yes. iTwin Capture Modeler is the most versatile solution on the market, and automatically extracts details from any resolution photos. You can properly register using the control points in your datasets.

The most popular fisheye cameras (GoPro, DJI…) are supported in our camera database. 360 cameras are not supported for the 3D reconstruction process. Their images usually result from a merge of planar images, and those planar images can be used as individual inputs for 3D reconstruction.

Yes. Most of the camera manufacturers’ RAW formats are supported, but currently only the 8-bit channel is used.

Yes. iTwin Capture Modeler accepts videos as an input in MP4/WMV/AVI/MOV/MPEG formats, and automatically extracts a frame according to a user-defined period.
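
To get a feel for how many frames a given extraction period yields, the sketch below lists the frame indices that a fixed period would select. It is an illustration only, not the software's own sampling logic; the function name `frames_to_extract` and the rounding rule are assumptions.

```python
def frames_to_extract(duration_s: float, fps: float, period_s: float) -> list[int]:
    """Indices of the frames picked when sampling one frame every `period_s` seconds.

    Assumption: sampling starts at the first frame and the period is rounded
    to the nearest whole number of frames.
    """
    if duration_s < 0 or fps <= 0 or period_s <= 0:
        raise ValueError("duration must be >= 0, fps and period must be > 0")
    total_frames = int(duration_s * fps)
    step = max(1, round(period_s * fps))
    return list(range(0, total_frames, step))


if __name__ == "__main__":
    # Hypothetical clip: 10 seconds at 30 frames per second, one frame every 2 seconds.
    indices = frames_to_extract(duration_s=10, fps=30, period_s=2)
    print(len(indices), "frames:", indices)
```

A shorter period gives more overlap between consecutive frames at the cost of more input photos to process.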

Mask creation or color equalization can be executed prior to iTwin Capture Modeler processing without any problem. However, any geometric alteration of the images (crop, rotate, distort…) is prohibited, as it will cause failures.

Yes. You can import an OPT file, or add a camera to your database and input its specific calibration parameters, such as distortion parameters, principal point, and focal length.

We recommend that every part of the scene is captured in at least 3 neighboring photographs. The overlap must then be more than 60% in all directions.
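
As a rough planning aid, the overlap recommendation can be turned into a maximum spacing between consecutive shots. The sketch below is not part of iTwin Capture Modeler. The function names and the simplified model (flat scene, identical photo footprints, one direction at a time) are assumptions for illustration.

```python
def max_photo_spacing(footprint_m: float, overlap: float = 0.6) -> float:
    """Largest distance between consecutive shots that still keeps `overlap`.

    `footprint_m` is the length of scene covered by one photo along the
    direction of travel (hypothetical input, measured or estimated).
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    return footprint_m * (1.0 - overlap)


def photos_per_point(overlap: float) -> float:
    """Average number of consecutive photos that see a point along one direction."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    return 1.0 / (1.0 - overlap)


if __name__ == "__main__":
    # Hypothetical footprint: each photo covers 100 m along the flight line.
    print("max spacing (m):", max_photo_spacing(100.0, overlap=0.6))
    print("photos per point at 70% overlap:", photos_per_point(0.7))
```

Under this simplified model, coverage along a single direction only reaches three photos per point once overlap is about two thirds or more, which is one reason to plan above the 60% minimum.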

We recommend using a camera with a reasonable sensor size (in mm) and a fixed focal length. iTwin Capture Modeler handles different camera properties in a single project well, but we recommend keeping the same “camera conditions” as much as possible throughout the capture to ensure a robust adjustment of all parameters.

Ground Control Points (GCP) are not mandatory but are highly recommended to accurately georeference the model, correct drift on corridor acquisitions, increase precision on the altitude, register different datasets in the same project, and assess the precision of aerotriangulation in formal reports.

It is always better to have a complete and accurate geotagged dataset. But in case information is missing, iTwin Capture Modeler can still process imagery alignment and ensure geo-registration based on what is available. The quality of geo-registration will be linked to the accuracy of available geotags.

No information of this kind is required. However, if the images are listed in an external column-based file that contains 3D coordinates, this will help and accelerate imagery alignment.

We offer a comprehensive acquisition guide that describes all the best practices to acquire photos for a specific purpose. It is possible to acquire vertical photos in a first flight, then acquire oblique photos, and add both to the same project.

Not for now. We believe that our job is to provide the best processing software, and we cooperate with major UAV manufacturers who already have their own mission planning solutions.

The software processes all images regardless of orientation, including oblique imagery, in the same way.

There are several ways to scale and geo-register a model: through geotags embedded in the photos, ground control points, or by adding manual tie points in the photos and defining a scale constraint.

Static parts will be reconstructed. Moving parts (cars, people…) will not. iTwin Capture Modeler also offers a bounding box feature to avoid undesired background reconstruction.

iTwin Capture Modeler includes a touch-up module for basic clean-up operations (hole filling, removal of floating parts, surface flattening…). It also allows export in OBJ format, which is supported by all 3D editing tools. After being touched up in a third-party application, the reality mesh can be imported back into iTwin Capture Modeler for update.

Reality meshes in 3D Tiles format can be manipulated, classified, and annotated in MicroStation and in Bentley iTwin Capture Web Viewer.

The global accuracy is about 1-2 pixels (resolution=projected size of a pixel on the scene, also called Ground Sampling Distance for aerial acquisitions) in a plane perpendicular to the acquisition, and 1-3 pixels along the main acquisition direction.
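
To translate these pixel figures into ground units, the resolution can be estimated with the standard pinhole relation between sensor size, focal length, distance, and image width. The sketch below is an approximation for planning purposes, not a result produced by the software. The function names and the camera values in the example are hypothetical.

```python
def ground_sampling_distance(
    sensor_width_mm: float,
    focal_length_mm: float,
    distance_m: float,
    image_width_px: int,
) -> float:
    """Projected size of one pixel on the scene, in metres (pinhole approximation)."""
    if focal_length_mm <= 0 or image_width_px <= 0:
        raise ValueError("focal length and image width must be positive")
    return (sensor_width_mm * distance_m) / (focal_length_mm * image_width_px)


def expected_accuracy_m(gsd_m: float) -> dict[str, tuple[float, float]]:
    """Accuracy ranges implied by the 1-2 pixel and 1-3 pixel figures quoted above."""
    return {
        "perpendicular_plane": (1 * gsd_m, 2 * gsd_m),
        "along_acquisition_direction": (1 * gsd_m, 3 * gsd_m),
    }


if __name__ == "__main__":
    # Hypothetical camera: 13.2 mm sensor width, 8.8 mm lens, 5472 px wide, 100 m away.
    gsd = ground_sampling_distance(13.2, 8.8, 100.0, 5472)
    print(f"GSD: {gsd * 100:.2f} cm per pixel")
    for axis, (low, high) in expected_accuracy_m(gsd).items():
        print(f"{axis}: {low * 100:.1f} to {high * 100:.1f} cm")
```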

Yes, absolute accuracy of the reality mesh will increase if input data (camera positions) are accurately defined.

Every acquisition process may benefit from a specific camera system. However, all cameras, from a mere smartphone to highly specialized aerial multidirectional camera systems, are supported by iTwin Capture Modeler. What is important is the capture pattern, the resolution (projected size of pixels on the scene), the sharpness of the photos, and a fixed focal length.

Definitely! This will help you to enliven any captured context in minutes. 3SM will be a more optimized format for such a purpose.

Urban area models generated by iTwin Capture Modeler are comparable to LOD3 CityGML but do not come with any semantics.

Georeferenced 3D models can be overlaid with laser scans. This gives users the best of both worlds, either to complete a LiDAR acquisition with photos, or to extract more details and increase precision for the same area.

iTwin Capture Modeler produces multiresolution meshes in KML format, which can be directly loaded into Google Earth.

OpenCities Map, Esri’s ArcGIS, SuperMap, and more generally, any 3D GIS or visualization software compatible with a multiresolution-tiled format (OpenCities Planner, Unity 3D, OpenSceneGraph, Eternix’ BlazeTerra, etc.).

Web-ready 3SM and Cesium 3D-Tiles will be compatible with iTwin JS web viewer.

All V8i SS4, CONNECT, and DGNDB platform-compatible products support the 3SM and 3MX formats, including Descartes, Map, ABD, OpenRoads ConceptStation, etc.

iTwin Capture Modeler can export an STL or OBJ format, widely accepted by 3D printers.

This is about 150 MB for a similar project, which is 100 times lighter than a colored LAS point cloud and 22 times lighter than the POD format.

Yes. iTwin Capture Modeler can produce models in LAS, LAZ, OPC and POD point cloud formats.

For communication purposes, LumenRT will do the job quite nicely. For more technical analysis, the OpenFlow brand is better suited.

MicroStation loads the Spatial Reference System (SRS) used to produce the 3SM file and references the model accordingly.

In iTwin Capture Modeler, there is an “Adjust photos onto Pointcloud” feature that automatically runs alignment before 3D reconstruction.

Yes, spatial reference system is selected at production stage by the user in a dedicated library.

Bentley iTwin Reality Data Viewer is the best-suited way to share with stakeholders. It displays 3D Tiles hosted on Reality Data Management, allowing photo-navigation, annotation, and permission management.

Clash detection can be done in MicroStation (on extracts of the mesh), or on point clouds in various solutions but not in a viewer, either web or desktop.

This is fully automatic as long as the input datasets are suitable (overlap, sharpness, optical properties, etc.).

Yes. There is a report at the end of the AT, containing the various RMS values as well as processing parameters. Quality metrics are also viewable in the 3DView.

The production time is counted as clock time. The average observed production speed, on a suitable workstation, is about 20 Gpix per Engine per day.
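
The figure of about 20 Gpix per Engine per day can be used for a back-of-the-envelope schedule. The sketch below is only an estimate. It assumes throughput scales linearly with the number of engines, which this page does not state, and the function name and project figures are hypothetical.

```python
def estimated_production_days(
    photo_count: int,
    megapixels_per_photo: float,
    engines: int = 1,
    gpix_per_engine_per_day: float = 20.0,
) -> float:
    """Rough clock-time estimate, in days, for processing a photo dataset.

    Assumption: each engine sustains the same average speed and the work
    splits evenly across engines.
    """
    if engines < 1 or gpix_per_engine_per_day <= 0:
        raise ValueError("need at least one engine and a positive speed")
    total_gpix = photo_count * megapixels_per_photo / 1000.0
    return total_gpix / (engines * gpix_per_engine_per_day)


if __name__ == "__main__":
    # Hypothetical project: 5,000 photos of 20 megapixels each.
    for n in (1, 2):
        days = estimated_production_days(5000, 20.0, engines=n)
        print(f"{n} engine(s): about {days:.1f} days")
```

Actual times depend on the workstation, the dataset, and the chosen outputs, so treat the result as an order of magnitude.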

Either through control points or geotags with the photos.

Through a dedicated UI in the software. You can also load them through a text file and then identify them in your photos.

Either by georeferencing the model, or by adding manual tie points with a distance constraint in the editor.
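
The distance-constraint approach amounts to comparing a known real-world distance with the distance between the same two points in the unscaled model. The sketch below illustrates that arithmetic only. It is not the software's editor logic, and the function names and coordinates are hypothetical.

```python
import math

Point = tuple[float, float, float]


def scale_factor(p1: Point, p2: Point, known_distance: float) -> float:
    """Factor that makes the model distance between p1 and p2 match a known distance."""
    model_distance = math.dist(p1, p2)
    if model_distance == 0:
        raise ValueError("the two tie points must be distinct")
    return known_distance / model_distance


def apply_scale(points: list[Point], factor: float) -> list[Point]:
    """Scale model coordinates uniformly about the origin."""
    return [(x * factor, y * factor, z * factor) for x, y, z in points]


if __name__ == "__main__":
    # Hypothetical tie points in arbitrary model units, measured 10 m apart on site.
    a, b = (0.0, 0.0, 0.0), (3.0, 4.0, 0.0)
    factor = scale_factor(a, b, known_distance=10.0)
    print("scale factor:", factor)
    print("scaled points:", apply_scale([a, b], factor))
```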

Yes, Bentley Descartes or iTwin Capture Manage & Extract are dedicated to this type of application.

iTwin Capture Modeler can perform such operations. Feature extraction relies on AI-trained models available on a dedicated Communities page. Ground extraction is one of them.

Yes, using the 3D viewer which is included in iTwin Capture Modeler. Users can measure coordinates, distances, height differences, areas, and volumes. In the web viewer, only coordinates, distances, and height differences can be measured.

Volumes are calculated by referencing either a mean plane created through the georeferenced selection polygon or a custom plane at a specific height.
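
The general idea of measuring a volume against a reference plane can be sketched on a regular height grid. This is a simplified illustration, not the algorithm used by the 3D viewer: it assumes a horizontal reference plane and a gridded surface, and it approximates the mean plane by the average height of the polygon vertices. All names are hypothetical.

```python
def mean_plane_height(polygon_vertex_heights: list[float]) -> float:
    """Simplified 'mean plane': a horizontal plane at the average vertex height."""
    if not polygon_vertex_heights:
        raise ValueError("at least one vertex height is required")
    return sum(polygon_vertex_heights) / len(polygon_vertex_heights)


def cut_and_fill(
    heights: list[list[float]], plane_height: float, cell_area: float
) -> tuple[float, float]:
    """Volume above the plane (cut) and below it (fill) for a regular height grid."""
    cut = 0.0
    fill = 0.0
    for row in heights:
        for h in row:
            diff = h - plane_height
            if diff > 0:
                cut += diff * cell_area
            else:
                fill += -diff * cell_area
    return cut, fill


if __name__ == "__main__":
    # Hypothetical 2 x 2 grid of surface heights (m), each cell covering 1 square metre.
    grid = [[1.0, 2.0], [3.0, 0.0]]
    plane = mean_plane_height([1.0, 1.0, 1.0, 1.0])  # or a custom height
    cut, fill = cut_and_fill(grid, plane, cell_area=1.0)
    print(f"cut: {cut} m3, fill: {fill} m3")
```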

No. This is what makes iTwin Capture Modeler so unique. City or bridge models can easily reach dozens of GB on a hard disk and still be streamed through a web or local server, thanks to the multiresolution architecture and the optimization of the mesh.

Photo acquisition! The reconstruction process is truly straightforward when the photos are appropriate in terms of resolution, sharpness, and overlap.

The software applies to any photo dataset (aerial, ground, outdoor, or indoor), so long as the objects in the scene are static (objects that move too much are automatically removed). The best practice for shooting photos indoors is to walk sideways with your back to a wall and shoot photos in multiple directions to the front (slightly upwards, downwards, rightwards, leftwards). The acquisition guide provides more information on this procedure.

iTwin Capture Modeler | System Requirements

Minimum

8 GB of RAM, NVIDIA, AMD or Intel GPU, Microsoft Windows 10/11 (64 bit) or Microsoft Windows Server 2012/2016/2019 (64 bit)

Recommended

64 GB of RAM, NVIDIA GeForce RTX 2080Ti GPU, Intel i9 4.0 GHz CPU, Microsoft Windows 10/11 (64 bit) or Microsoft Windows Server 2012/2016/2019 (64 bit)

Browser Compatibility

Edge, Chrome, Firefox

iTwin Capture Modeler | Technical Capabilities

Input

- Multiple camera project management

- Multi-camera rig

- Visible field

- Infrared/thermal imagery

- Videos

- Laser point cloud

- Surface constraints: imported from 3rd party or automatically detected using AI

- Metadata file import

- EXIF

Calibration / Aerotriangulation (AT)

- Automatic calibration / AT / bundle adjustment

- Parallelization ability on iTwin Capture Modeler

- Project size limitation

- Control points management

- Block management for large AT

- Quality report

- Laserscan/photo automatic registration

- Splats display mode

Georeferencing

- GEOCS management

- Georeferencing of generated results

- QR-Codes, April tags, and Chili tags: Ground control points automation

Scalability

- Tiling

- Parallel processing possible on two computers

Computation

- GPU based

- Multi-GPU processing based on Vulkan (optional)

- Background processing

- Scripting language support / SDK

Editing

- Touch-up capabilities (export/reimport of OBJ/DGN)

- 3D Mesh and orthophoto integrated touch-up capabilities

- 3D mesh and orthophoto touch-up capabilities through third party application

- Orthophoto visualization

- DEM / DSM visualization

- DTM extraction

- Cross-sections

- Contour lines (with Scalable Terrain Model)

- Point cloud filtering and classification

- Modeling feature

- Support of streamed reality meshes

- Create scalable mesh from terrain data

- Volume calculation

Output and Interoperability

- Multiresolution mesh (3MX, 3SM and Cesium 3D Tiles)

- Bentley DGN (mesh element)

- 3D CAD Neutral formats (OBJ, FBX)

- KML export (mesh)

- Esri I3S / I3P

- Other 3D GIS formats (SpacEyes, LOD Tree, OSGB)

- 3D PDF

- AT result export (camera calibration and photo poses)

- DEM / DSM generation

- True orthophoto generation

- Blockwise color equalization

- Point cloud (LAS, LAZ, and POD)

- Input data resolution texture mode

- AT quality report

- Animations (fly-through video generation)

- QR code: 3D spatial registration of assets

Viewing

- Free iTwin Capture Desktop Viewer

- Web viewing

Measurement and Analysis

- Distances and positions

- Volumes and surfaces

- Input data resolution

- Photo-navigation tool

Bentley CONNECT

- Upload to Reality Data Management

- Reality mesh streaming from Reality Data Management

- Associate to CONNECT project

- CONNECT Advisor

Collaborate in real time

Capture and share real-time changes; collaborate on latest documents anywhere and anytime over mobile and desktop devices.

Connect project participants through an instant-on cloud service

Use a secure cloud-based portal to work from any location and gain built-in data backup and recovery, without needing to install or maintain any software. Connect your entire supply chain with ease, using the secure platform to efficiently collaborate without opening your firewall.

Find documents quickly

Access your latest files and favorite content based on your requirements for accurate document retrieval.

Employ trusted file sharing

Eliminate the redundancy and confusion often caused by documents stored on multiple sites and with different applications.

Put files in a project context

Create and manage file sharing with user, workgroup, and team folder structures.

Licensing and Subscription options

Choose What is Right for You

One-year license with training

Virtuoso Subscription – A popular choice for small and medium-sized businesses

Get access to software that comes with training – fast! Bentley’s eStore offers a convenient way to lease a 12-month license of Bentley software for a low, upfront cost. Every online purchase comes as a Virtuoso Subscription that includes training and auto-renewals. With no contract required, it’s easy to get started quickly.

One-time purchase with support

Perpetual License with SELECT

A perpetual license of Bentley software is a one-time purchase, with a yearly maintenance subscription, called SELECT. This includes 24/7/365 technical support, learning resources, and the ability to exchange licenses for other software once a year. With SELECT, you will benefit from:

- License pooling, so you can access your software from multiple computers.

- Access additional Bentley software with Term Licenses, which allow you to pay for what you use without the upfront cost of purchasing a perpetual license.